Measuring Marketing Attribution: A Conversation with Prof. Ron Berman

Marketing has a measurement problem. Budgets are larger than ever, channels are more fragmented than ever, and yet most marketers are still making decisions based on models built for a simpler world. We sat down with Professor Ron Berman of the Wharton School at the University of Pennsylvania — one of the leading academic voices in marketing science — to cut through the noise on three of the most debated tools in the field: Multi-Touch Attribution (MTA), Marketing Mix Modeling (MMM), and A/B Testing.

The Core Challenge: You Can't Manage What You Can't Measure

Before diving into the tools, Prof. Berman framed the fundamental tension every marketing leader faces today. Modern customer journeys span dozens of touchpoints: a Google search, a LinkedIn ad, a podcast mention, a retargeted display ad, a direct visit. Each channel wants credit. Each vendor claims their platform drove the conversion. The result is a measurement ecosystem where the credit claimed across platforms often adds up to more than 100% of actual conversions, an impossibility that signals something is deeply broken.

"The question isn't which model is right," Prof. Berman noted. "It's which model is right for your business, and most companies haven't asked that question carefully enough."

Multi-Touch Attribution (MTA): Powerful, but Narrow

MTA attempts to assign fractional credit to each touchpoint in a customer's path to conversion. Last-click, first-click, linear, time-decay, data-driven — the variations are endless, and the debates around them are fierce.
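
To make the rule-based variants concrete, here is a minimal Python sketch of how last-click, first-click, linear, and time-decay rules split one conversion across a path. The channel names, the function names, and the `decay` value of 0.5 are illustrative assumptions, not anything specified in the conversation, and data-driven attribution is left out because it requires a fitted model rather than a fixed rule.

```python
from typing import Callable, Dict, List

Rule = Callable[[List[str]], List[float]]


def last_click(path: List[str]) -> List[float]:
    # All credit goes to the final touchpoint before conversion.
    return [0.0] * (len(path) - 1) + [1.0]


def first_click(path: List[str]) -> List[float]:
    # All credit goes to the first touchpoint.
    return [1.0] + [0.0] * (len(path) - 1)


def linear(path: List[str]) -> List[float]:
    # Every touchpoint gets an equal share.
    return [1.0 / len(path)] * len(path)


def time_decay(path: List[str], decay: float = 0.5) -> List[float]:
    # Each step further from the conversion multiplies the weight by `decay`
    # (an assumed value); weights are then normalized to sum to 1.
    n = len(path)
    raw = [decay ** (n - 1 - i) for i in range(n)]
    total = sum(raw)
    return [w / total for w in raw]


def attribute(path: List[str], rule: Rule) -> Dict[str, float]:
    # Sum the fractional credit per channel for one converting path.
    if not path:
        raise ValueError("path must contain at least one touchpoint")
    credit: Dict[str, float] = {}
    for channel, weight in zip(path, rule(path)):
        credit[channel] = credit.get(channel, 0.0) + weight
    return credit


# Hypothetical journey, ordered from first touch to last touch.
path = ["search", "linkedin_ad", "podcast", "display_retargeting", "direct"]
for name, rule in [("last_click", last_click), ("first_click", first_click),
                   ("linear", linear), ("time_decay", time_decay)]:
    print(name, attribute(path, rule))
```

Every rule hands out exactly one conversion's worth of credit. The rules differ only in who receives it, which is why the choice of rule can change a channel's apparent performance without any change in what customers actually did.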

Where MTA works well:

- Purely digital funnels where every touchpoint is tracked

- Short sales cycles (e-commerce, lead gen, app installs)

- Environments with strong identity resolution (logged-in users)

Where MTA breaks down:

- It is blind to offline channels — TV, radio, out-of-home, word of mouth

- Privacy changes (iOS 14+, cookie deprecation) have severely degraded signal quality

- It tells you what happened, but not why — correlation masquerading as causation

Prof. Berman was direct on this point: MTA is a useful operational tool, but it should never be the sole basis for budget allocation decisions. Over-relying on it systematically under-funds upper-funnel and brand channels — which don't show up cleanly in click paths — and over-rewards retargeting, which often takes credit for conversions that would have happened anyway.

Marketing Mix Modeling (MMM): The Comeback Kid

MMM, once considered outdated, has experienced a dramatic renaissance, fueled in part by the privacy-related collapse of user-level tracking and in part by major platforms like Google and Meta releasing their own open-source MMM frameworks (Meridian and Robyn, respectively).

MMM takes an aggregate, econometric approach. It uses historical spend and sales data across channels to statistically estimate the contribution of each marketing activity, while controlling for external factors like seasonality, pricing, and macroeconomic conditions.

MMM's key strengths:

- Works across all channels, including offline and brand

- Privacy-safe by design — no individual user data required

- Captures long-term brand-building effects that MTA misses entirely

- Can model diminishing returns and saturation curves for budget optimization
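
The carryover and saturation ideas behind these strengths can be sketched in a few lines of Python. This is a generic illustration using a geometric adstock and a Hill-type saturation curve, two transforms commonly used in MMM work. It is not the Meridian or Robyn API, and the `carryover`, `half_saturation`, and `shape` values and the spend figures are assumptions chosen only for the example.

```python
from typing import List


def geometric_adstock(spend: List[float], carryover: float = 0.5) -> List[float]:
    # Part of each period's advertising effect carries into the next period:
    # adstock[t] = spend[t] + carryover * adstock[t - 1]
    adstocked = []
    previous = 0.0
    for s in spend:
        previous = s + carryover * previous
        adstocked.append(previous)
    return adstocked


def hill_saturation(x: float, half_saturation: float, shape: float = 1.0) -> float:
    # Maps spend to a response between 0 and 1 with diminishing returns.
    # `half_saturation` is the spend level that yields half the maximum response.
    if x <= 0:
        return 0.0
    return x ** shape / (x ** shape + half_saturation ** shape)


# Hypothetical weekly spend: one burst followed by two dark weeks.
weekly_spend = [100.0, 0.0, 0.0]
print(geometric_adstock(weekly_spend))  # [100.0, 50.0, 25.0]

# Diminishing returns: the second 100 of spend buys less than the first.
first_100 = hill_saturation(100.0, half_saturation=100.0)
second_100 = hill_saturation(200.0, half_saturation=100.0) - first_100
print(first_100, second_100)
```

In a full model, transformed spend series like these would enter a regression alongside controls such as seasonality and pricing. The fitted saturation curve is what lets a planner ask where the next dollar has the highest marginal return.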

The honest limitations:

- Requires significant data history — typically 2+ years of weekly data for reliable outputs

- Operates at an aggregate level and cannot inform real-time, campaign-level decisions

- Model quality is highly sensitive to data quality and the assumptions built in

The key insight from Prof. Berman: MMM tells you what should work at a strategic level. It is a budget planning tool, not an execution tool. Companies that use it to set channel mix and then use MTA to optimize within channels are getting the best of both worlds.

A/B Testing: The Gold Standard with Real Constraints

If MMM is the strategist and MTA is the tactician, A/B testing is the scientist. Randomized controlled experiments are the only methodology that can establish true causal lift — not just correlation.

When A/B testing is the right call:

- Testing creative, messaging, landing pages, pricing, or product features

- Geo-based holdout tests to measure the true incremental impact of a campaign

- Platform-level ghost bidding experiments to verify vendor-reported lift
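
As a rough illustration of how a geo-based holdout is read, the sketch below uses a simple difference-in-differences estimate: the change in the treated regions minus the change in the holdout regions over the same period. The sales figures are invented for the example, and real geo tests typically add matching or synthetic-control methods plus an uncertainty estimate, none of which is shown here.

```python
from statistics import mean
from typing import List


def diff_in_diff(treat_pre: List[float], treat_post: List[float],
                 ctrl_pre: List[float], ctrl_post: List[float]) -> float:
    # Incremental effect = change in treated geos minus change in holdout geos.
    # Subtracting the holdout change removes shared trends such as seasonality.
    treated_change = mean(treat_post) - mean(treat_pre)
    control_change = mean(ctrl_post) - mean(ctrl_pre)
    return treated_change - control_change


# Hypothetical average weekly sales per geo, before and during the campaign.
treated_before = [100, 110, 90]
treated_during = [130, 140, 120]
holdout_before = [95, 105, 100]
holdout_during = [105, 115, 110]

incremental = diff_in_diff(treated_before, treated_during,
                           holdout_before, holdout_during)
print(incremental)  # 20: treated geos rose by 30, holdout geos rose by 10
```

A naive before-and-after comparison would have credited the campaign with the full increase of 30. The holdout shows that 10 of it would have happened anyway.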

The real-world constraints Prof. Berman raised:

- Scale requirements: You need sufficient traffic volume for statistical significance. Small brands simply cannot run clean experiments across all channels simultaneously.

- Time requirements: Many tests need weeks or months to reach significance — too slow for fast-moving campaign decisions.

- Novelty effects: Short-term test results can be misleading if consumer behavior changes once a campaign scales up.

- Ethical and business constraints: Withholding treatment from a control group has real costs — revenue foregone, customers underserved.
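
The scale constraint is easy to quantify. The sketch below applies the standard normal-approximation formula for comparing two conversion rates to estimate the users needed per arm. The 2% baseline rate and the 10% and 50% relative lifts are hypothetical inputs, and the 5% significance level and 80% power are conventional defaults assumed for the example.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(baseline_rate: float, relative_lift: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    # Two-sided test of two proportions, normal approximation:
    # n = (z_{1-alpha/2} + z_{power})^2 * (p1(1-p1) + p2(1-p2)) / (p2 - p1)^2
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must be in (0, 1) and must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical: 2% baseline conversion rate.
small_lift = sample_size_per_arm(0.02, 0.10)  # detect a 10% relative lift
large_lift = sample_size_per_arm(0.02, 0.50)  # detect a 50% relative lift
print(small_lift, large_lift)
```

Under these assumptions, detecting a 10% relative lift takes roughly 80,000 users per arm, while a 50% lift needs only a few thousand. Small effects on low baseline rates are what put clean experiments out of reach for low-traffic brands.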

The practical takeaway: run experiments where you can, but be honest about where you cannot. An untested assumption fed into an MMM is not automatically worse than a noisy A/B result from an underpowered test.

What This Means for Marketing Leaders in 2026

Prof. Berman closed with a note that felt especially relevant for the B2B and education marketing space: measurement maturity is a competitive advantage. As privacy regulations tighten and third-party signals continue to erode, organizations that have invested in first-party data infrastructure, clean MMM inputs, and a culture of experimentation will have a structural edge over those still relying on platform-reported ROAS. The roadmap is not complicated — but it does require commitment:

- Audit your current measurement stack. Understand what each tool is actually measuring and what it cannot see.

- Invest in data infrastructure before models. A sophisticated model on dirty data produces confidently wrong answers.

- Build a culture of calibration. Use A/B tests to validate your MMM outputs. Use MMM to contextualize your MTA signals. Let the models talk to each other.

- Partner with academics. The gap between academic marketing science and practitioner marketing is closing — and those who bridge it first will win.

You May Also Like

These Related Stories

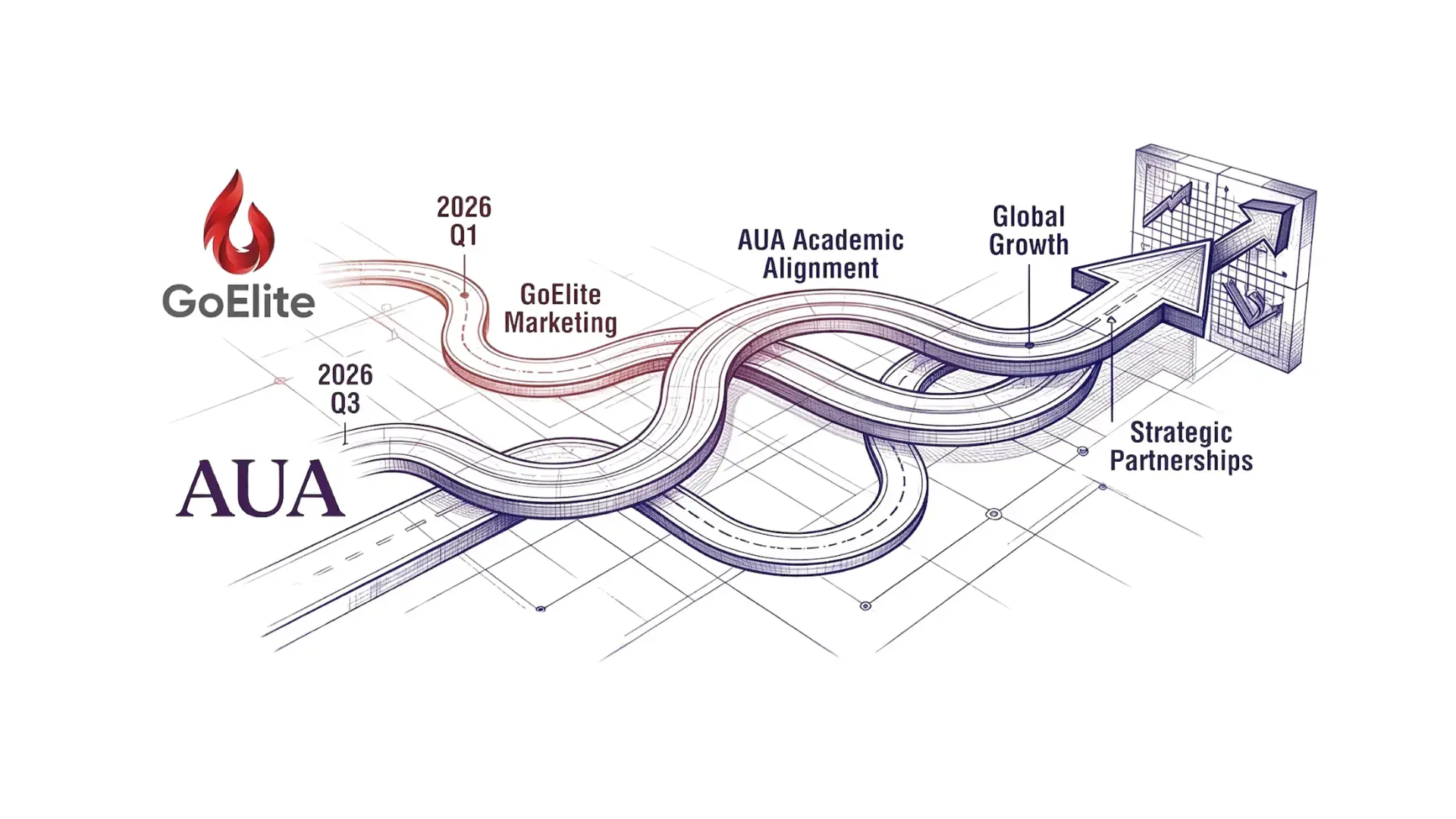

Strategic Synergy: GoElite & AUA Align on 2026 Marketing Growth Plans

GoElite at INBOUND 25: AI, Marketing, and Team Bonding

-1.jpg?width=600&height=1000&name=%E9%87%8E%E7%81%AB%E4%B8%8A%E5%B2%B8%E7%BE%A4%E4%BA%8C%E7%BB%B4%E7%A0%81%20(600%20x%201800%20px)-1.jpg)

No Comments Yet

Let us know what you think